According to cybersecurity analytics firm RedSeal, security executives are looking for better ways to measure cybersecurity investments. As CEOs plan to increase cybersecurity spending by as much as 84 percent, 87 percent of CEOs state that they need a better way to measure the effectiveness of those investments.

Security teams want to invest in mobile app security testing and also demonstrate the value mobile app security assessments bring to the enterprise as a whole. Tracking and communicating data about the risk the security team is reducing as a result of testing is a good way to do so.

In our Evaluation Guide for Mobile App Security Testing, we identified key criteria for evaluating mobile app security testing tools that also provide a baseline for metrics that measure your progress toward your objectives. These metrics should be clear and easily accessed and understood by both your team and company leadership. Below is a summary of those criteria and how they can help you identify metrics to track the success of your investments in testing.

Things to think about when identifying metrics

Reporting: The structure and contents of reports will affect the metrics you can track and communicate and the ease with which you can do so.

Process: Metrics should align with each stage of the SDLC so that you can measure the vulnerabilities identified and time-to-fix at each stage, for example.

Coverage: Metrics need to reflect the proportion of code tested by you and your team. Teams should aim to achieve a combination of accuracy and depth as they identify flaws and vulnerabilities.

Reporting metrics: Correlate risk ratings to ensure you are eliminating vulnerabilities that matter

To quantify the risk resulting from mobile app vulnerabilities and code defects, organization leaders and security teams should look for tools that incorporate open-source risk-scoring standards and guidelines. The following standards help security teams report on the amount of risk they’ve reduced as a result of remediating flaws and security vulnerabilities:

- Common Vulnerability Scoring System (CVSS): CVSS scores, numbered from 0 to 10, allow teams to properly rate vulnerabilities and determine urgency of response.

- Common Weakness Enumeration (CWE): CWE offers a unified, measurable set of software weaknesses that can be applied to mobile apps. By mapping findings to CWE entries, you can consistently count the weaknesses present in an app and measure how long it takes to fix them.

- National Information Assurance Partnership (NIAP) requirements: These requirements from NIAP offer an achievable and repeatable set of standards to use for evaluating mobile apps for vulnerabilities. A recent report on mobile device security from the Department of Homeland Security (DHS) mentions that more government agencies need to adopt app vetting criteria such as the NIAP requirements. Enterprises can also benefit from NIAP’s mobile app vetting guidelines to assess the security status of their mobile apps and ensure they meet the requirements.

- OWASP Mobile Top 10 Risks: This list of risks from OWASP serves as a framework for identifying mobile-specific risks. Each category offers a risk summary and exploit information.

Example goals and metrics:

- Flaws identified and fixed along with severity: By using a standard like CVSS, teams can categorize flaws and vulnerabilities into low, medium, and high risk groups to fix the highest severity flaws first. Teams should report on the number of flaws fixed in each risk group.

- Number of OWASP Mobile Top 10 Risks identified and fixed: By evaluating mobile apps for flaws and vulnerabilities in 10 distinct categories, security teams can report on successfully reducing the flaws in each risk category.

- Reduce the number of flaws associated with NIAP and CWE standards: Security teams can map flaws to NIAP and CWE to report on the reduction of those known vulnerabilities.
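
As a rough illustration of the first metric above, the sketch below groups findings by CVSS score and counts how many were fixed in each group. The `Finding` record and the sample data are hypothetical, and the cutoffs follow the three-level low/medium/high scale (0.0 to 3.9, 4.0 to 6.9, 7.0 to 10.0); CVSS v3.x additionally defines "none" and "critical" ratings, so adjust the thresholds to whichever version your tool reports.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Finding:
    """One assessment finding (hypothetical record shape)."""
    identifier: str
    cvss_score: float  # CVSS base score, 0.0 to 10.0
    fixed: bool


def risk_group(score: float) -> str:
    """Map a CVSS base score to a low/medium/high risk group."""
    if not 0.0 <= score <= 10.0:
        raise ValueError(f"CVSS score out of range: {score}")
    if score >= 7.0:
        return "high"
    if score >= 4.0:
        return "medium"
    return "low"


def fixed_by_group(findings):
    """Return {group: (fixed_count, total_count)} for each risk group."""
    total = Counter(risk_group(f.cvss_score) for f in findings)
    fixed = Counter(risk_group(f.cvss_score) for f in findings if f.fixed)
    return {g: (fixed[g], total[g]) for g in ("high", "medium", "low")}


# Made-up sample data for illustration only.
findings = [
    Finding("F-1", 9.8, True),
    Finding("F-2", 7.5, False),
    Finding("F-3", 5.3, True),
    Finding("F-4", 2.1, False),
]

report = fixed_by_group(findings)
for group, (done, total) in report.items():
    print(f"{group}: {done}/{total} fixed")
```

Listing the high group first keeps the report aligned with the goal of fixing the highest-severity flaws first.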

Process metrics: Improve code quality and remove vulnerabilities as early as possible

By improving code quality, you and your team can reduce the number of flaws introduced as an app is coded. One of the best ways to improve code quality is to educate developers, QA managers, and security professionals about best practices for secure mobile development. These best practices should be kept up to date and, where possible, supported by regular training or workshops.

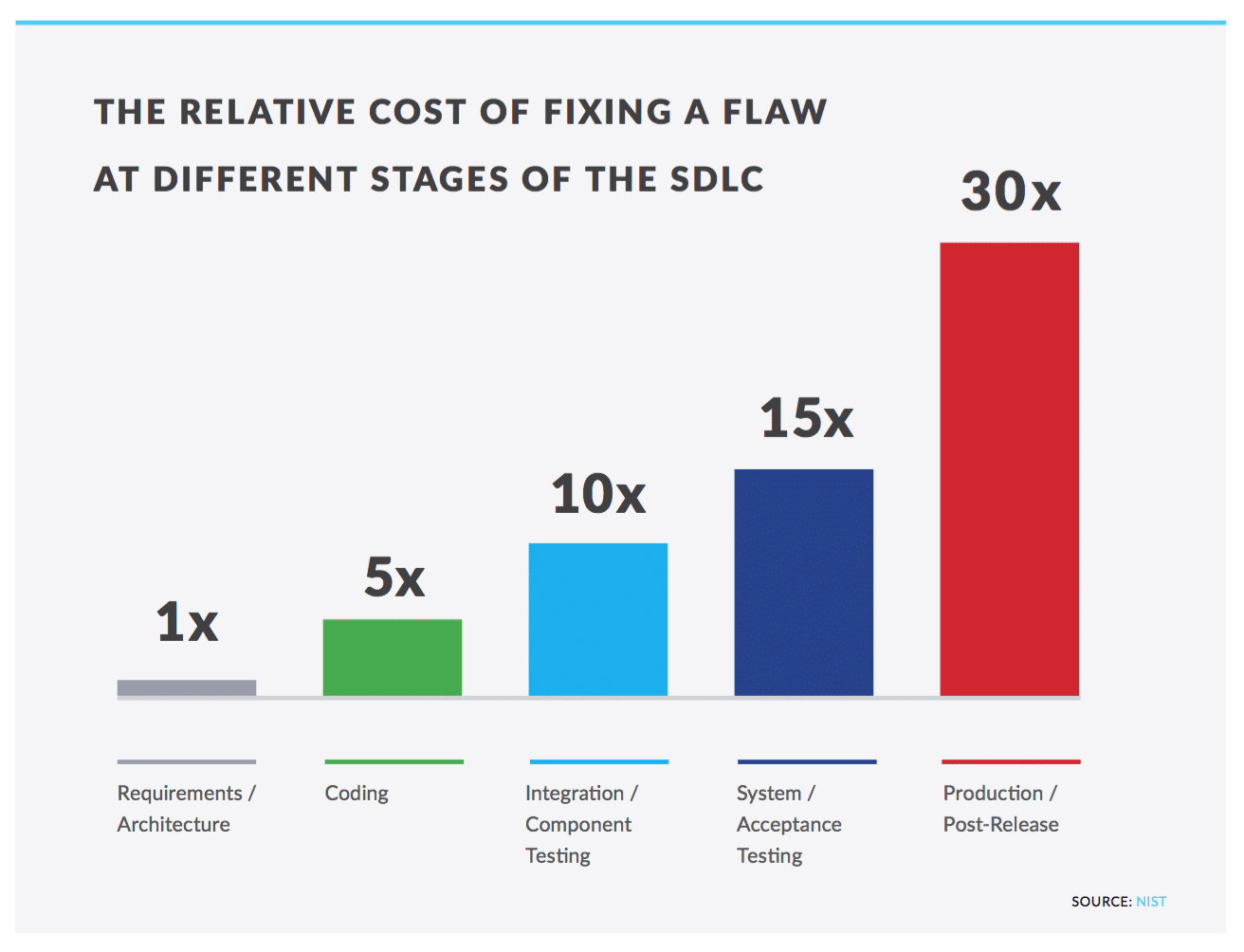

According to the National Institute of Standards and Technology (NIST), the cost to fix a flaw in the production stage of the SDLC is approximately 30 times greater than fixing it during the requirements stage. To drive down the cost of fixing flaws, companies should look to fix them earlier in the SDLC and report on timely remediation. If you are looking for a place to start, you can identify the number of flaws found in production and then work to reduce that number by aiming to find those flaws in earlier stages of the SDLC.

Example goals and metrics:

- Measure the number of flaws that violate your development requirements: By creating best practices and keeping them up to date, security teams can support secure development by referencing those standards when communicating with their development and QA teams. Count the security flaws that violate your internal best practices and track how that number changes over time.

- Measure the stage where flaws are identified and how quickly flaws are fixed: Enterprises should aim to fix flaws earlier in the SDLC. Fixing a flaw just one stage earlier in the SDLC can reduce costs by 15 times or more. For each stage of the SDLC, measure both how many vulnerabilities are identified and how long each one takes to fix. Adding remediation guidelines to each identified flaw can help developers and QA managers fix security issues more quickly.
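
A minimal sketch of the second metric, assuming each flaw is recorded with the stage where it was found plus the dates it was found and fixed. The stage names, the record layout, and the sample dates are all hypothetical; a real program would pull these from its issue tracker or testing tool.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# (stage where the flaw was found, date found, date fixed)
# Made-up sample data for illustration only.
flaws = [
    ("coding", date(2024, 1, 8), date(2024, 1, 10)),
    ("coding", date(2024, 1, 15), date(2024, 1, 19)),
    ("testing", date(2024, 2, 1), date(2024, 2, 8)),
    ("production", date(2024, 3, 4), date(2024, 3, 25)),
]


def stage_metrics(records):
    """Return the flaw count and mean days-to-fix for each SDLC stage."""
    days_to_fix = defaultdict(list)
    for stage, found, fixed in records:
        days_to_fix[stage].append((fixed - found).days)
    return {
        stage: {"count": len(days), "mean_days_to_fix": mean(days)}
        for stage, days in days_to_fix.items()
    }


metrics = stage_metrics(flaws)
for stage, values in metrics.items():
    print(f"{stage}: {values['count']} flaws, "
          f"{values['mean_days_to_fix']} days to fix on average")
```

Reporting the count and the time to fix side by side shows both whether flaws are being caught earlier and whether remediation is getting faster.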

Coverage metrics: Avoid false positives while improving testing accuracy and depth

False positives are a recurring challenge facing security leaders as they report on the strengths and weaknesses of their mobile app security testing program. At an FTC Start with Security event in June 2016, a CISO for a global investment company mentioned that the best way to set up a failing security program is to create a large number of false positives and hand them to developers. Such an approach, he explained, will result in spending a quarter of the year “cleaning up the mess” and repairing relationships between security and development.

According to Gartner, enterprises should consider mobile app security testing solutions that perform multiple forms of analysis to increase code coverage and accuracy. By employing multiple types of analysis, you can gather increased context, accuracy, and depth of code coverage. For example, behavioral testing would go far beyond static analysis to not only determine whether a mobile app accesses the device’s contact list, but also determine whether data from the contact list is insecurely transmitted to specific destinations.

Example goals and metrics:

- Reduce false positives: Security teams should track security assessment results and measure the number of correct findings versus false positives. By continually monitoring feedback from other departments at your organization, you can better measure the effectiveness of your mobile app security testing tools.

- Consider a regular, third-party audit: By comparing results from your team against a team of skilled experts, you can improve upon your weaknesses while reinforcing your strengths. The results of your audit can offer a baseline for you and your team every year or even every quarter.
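
To make the false-positive metric concrete, here is a small sketch that assumes every reported finding has been triaged as either `"confirmed"` or `"false_positive"`. Those labels and the sample verdicts are hypothetical. It reports the share of reviewed findings that were correct and the share that were false positives.

```python
def triage_summary(verdicts):
    """Summarize triage verdicts for findings reported by a testing tool."""
    confirmed = sum(1 for v in verdicts if v == "confirmed")
    false_positives = sum(1 for v in verdicts if v == "false_positive")
    reviewed = confirmed + false_positives
    if reviewed == 0:
        raise ValueError("no triaged findings to summarize")
    return {
        "confirmed": confirmed,
        "false_positives": false_positives,
        # Share of reviewed findings that were correct.
        "correct_share": confirmed / reviewed,
        # Share of reviewed findings that were false positives.
        "false_positive_share": false_positives / reviewed,
    }


# Made-up sample verdicts for illustration only.
verdicts = ["confirmed"] * 6 + ["false_positive"] * 2
summary = triage_summary(verdicts)
print(summary)
```

Running the same summary on each assessment cycle, or on a third-party audit of the same app, gives a baseline for comparing results over time.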

The key to deploying more secure mobile apps is a mobile app security program that sets objectives, measures your progress in achieving them with metrics, and celebrates success among the security and development teams and the enterprise as a whole.