Originally I was going to just post a short note in a forum asking who the right person is to talk to about this topic, but then I realized it’s probably a much bigger and more involved topic than I thought. I urge the reader to chime in and help resolve some of these challenges.

CVE is well-positioned to play a critical role in tracking the risk level of mobile computing. A “full” risk profile has to take into account not just system flaws but also flaws in the apps a user is running. Standards such as CVSS and CWE are excellent tools for articulating the severity and types of vulnerabilities systematically. However, CVEs have largely focused on server-side and related flaws, even as the security community has evolved to treat client-side vulnerabilities as a critical aspect of dealing with risk. While CVE has worked well for tracking system flaws in mobile operating systems, there remain quirks with regard to mobile app flaws.

I have a couple of questions and thoughts to share with the community that I hope will elicit some response. This content is a little different than the normal technical content in this section of the blog, so please bear with me.

For now I’ll focus on the Android Security community but likely there will be similar questions for iOS.

CVEs for mobile apps

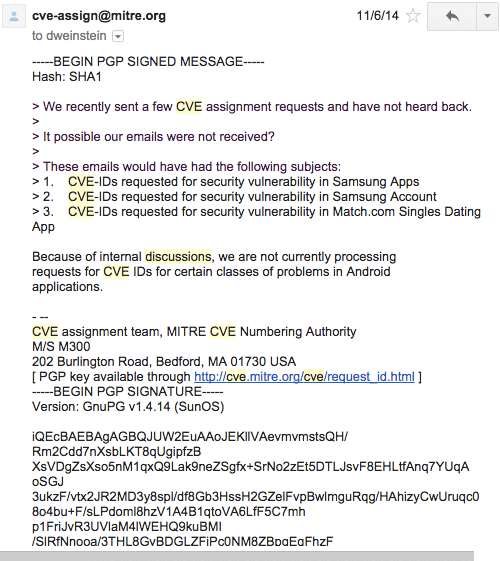

Back in November of last year the message from [email protected] was frustrating at best:

I don’t want to “hate” on MITRE here but some of the responses (or lack thereof) have been really frustrating from a researcher perspective.

Are we all in agreement with using CVEs to track bugs in mobile apps? Lots of work has gone into the definitions and the quantitative representation of the vulnerability dimensions, and there has been a lot of support and uptake from industry. But I think we can agree there are some seams showing in the system. I hope to be concrete about this. It seems like using CVE is the “right thing” here, but MITRE has been very cryptic (in my opinion) about what qualifies for a CVE and what doesn’t. These discussions should not happen in some closed-door meeting, in my opinion, or at least should not end there.

Is MITRE consistently responding to other researchers’ requests for CVEs? Bugs that are not OS level, but rather faults in a mobile app (that could lead to privilege escalation), are just as important to track as system flaws. Personally I have experienced app CVE requests that weren’t addressed in a timely manner and some CVEs that have simply never been populated. Attaching a CVE to a vulnerable app, even if it’s an old version, is actually a big part of tracking the reputation of the developer as well!

Who acts as a CVE Numbering Authority (CNA) on behalf of all the app developers on the market? Not all the vulnerabilities our team finds are system level. In fact we’ve found many thousands of problems that could each get a CVE. Should Google step in here as a formal body to assist with coordinating CVEs, etc., for these apps?

Is the process MITRE established designed and prepared to handle the mountain of bugs that will be thrown at it when the community really focuses on this problem as we have? Can the community better crowd source this effort with confirmation of vulnerability reporting in a more scalable and distributed manner that doesn’t place a single entity in a potentially critical path?

Vendors silently fixing vulnerabilities

This has to stop. App developers are probably not prepared to deal with vulnerability notifications… I’ll grant that. I suspect many are just trying to get an organization off the ground and are moving fast. Today, however, perhaps more than ever, vendors need to be prepared to deal with security problems in their apps. To that end, when the community shares vulnerability info with vendors, please please please don’t silently fix the problem. This is really painful. Researchers would like to be able to create a fully automated test for every vulnerability that’s discovered and run it in a control loop against every new version of the app… and that’s something we’re working on… but we aren’t quite there yet. Close, but not there yet. Vendors are doing the community a huge disservice when they silently fix a bug we’ve reported to them.

Part of the reason we disclose “Basic App Vulnerabilities” to both users and developers is to make sure there’s an incentive for the developers to fix the problem and let users know it has been fixed. This encourages transparency that would otherwise be difficult to attain. If you’re curious you can see our disclosure policy.

CVE version field

There are a few warts with the way CVE handles some fields that we as a community should address.

Often the only way to map a version string to a version code is to have the actual binary and read both from the manifest. Version strings are just strings, and not everyone uses semantic versioning like "1.2.3". Personally I’ve seen entire change logs of an app included as the value of a versionName, which makes the field rather useless for tracking security bugs. versionCode, by contrast, is an integer that (at least on Google Play) is enforced to be monotonically increasing. This is a hugely important property that can help classify older versions of an app as vulnerable, i.e., anything less than a given integer version. Sometimes it’s possible to get the older version directly from Google, but sometimes the app has disappeared.
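To make the versionCode argument concrete, here is a minimal sketch in Python of how the monotonic property could be used to classify releases. Everything in it is hypothetical and for illustration only: the `AppRelease` record, the `is_vulnerable` helper, the package name, and the version values are made up, and it assumes we already know the first versionCode in which a fix shipped. It is not how CVE or Google Play represents this data today.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AppRelease:
    """Hypothetical record of one published release of an app."""
    package: str
    version_code: int   # integer, increases with every upload
    version_name: str   # free-form string chosen by the developer


def is_vulnerable(release: AppRelease, first_fixed_code: int) -> bool:
    """A release is treated as vulnerable if it predates the fixed versionCode."""
    return release.version_code < first_fixed_code


# Made-up release history; note that versionName is not reliably comparable.
releases = [
    AppRelease("com.example.app", 10, "1.0"),
    AppRelease("com.example.app", 11, "Bug fixes and performance improvements"),
    AppRelease("com.example.app", 12, "1.2.0-beta"),
]

FIRST_FIXED = 12  # assumed: the versionCode where the fix first shipped

vulnerable = [r.version_code for r in releases if is_vulnerable(r, FIRST_FIXED)]
print(vulnerable)
```

The comparison is a single integer check, which is exactly what a free-form versionName cannot offer: the second release above has a change-log sentence as its name, so no string comparison would place it correctly.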

It would be helpful if Google could share an easy to obtain record of each version of the apps that were published so that the security community doesn’t have to waste time crawling the store. Chances are that last part will happen anyway, but for various other reasons.

Parting ideas about mobile app CVEs

- Every vendor distributing an app on the Play Store should be required to provide a security related contact (e.g., [email protected])

- Google could be a little clearer about when [email protected] should be used for organized disclosure of bugs and consider taking a stronger position in the process.

- CVE should probably be using versionCode in addition to versionName fields to assist with vulnerability tracking

- MITRE and/or whoever runs CVE these days should clarify what is appropriate for CVEs so that we know where we should be investing our efforts

- Should we pursue a decentralized CVE request process based on crowdsourcing and reputation?