NowSecure AI-Navigator finds mobile app risks that hide behind the login

Mobile applications use authentication to protect the most sensitive enterprise and consumer data and critical business functions from security, privacy, safety and compliance risk.

When testing fails to authenticate, up to 95% of the application remains hidden, along with its vulnerabilities, data leaks, supply chain risks, and AI security and governance risks.

The mobile app risk gap

Security teams are under pressure to move faster just as mobile apps grow more critical and more complex, and as AI accelerates development cycles. Manual pen testing, static testing, and dynamic testing that does not execute user authentication all fall short.

- Static analysis cannot observe runtime behavior or data in motion

- Unauthenticated testing overlooks the majority of real-world risk

- Authentication flows change frequently and break automated authenticated testing

- Manual configuration and scripting do not scale across releases or an expanding enterprise app portfolio

- Pen testing produces required coverage but can be time-consuming

A smarter approach to authenticated mobile testing

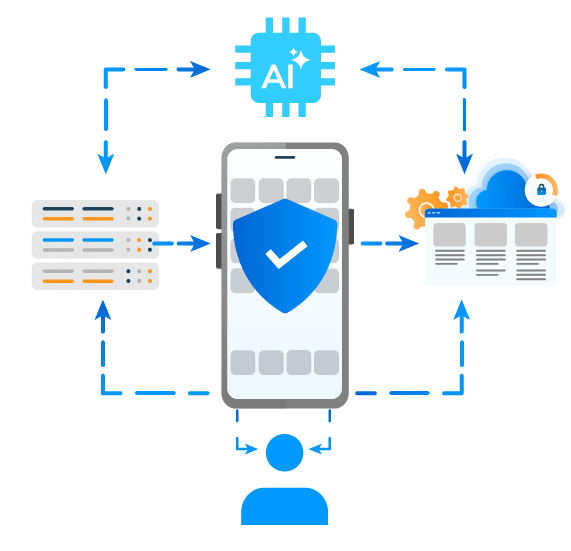

With AI-Navigator, NowSecure enables continuous, automated, authenticated dynamic testing that exposes these risks by testing how mobile apps actually behave in production.

By automating login and navigating authenticated workflows, NowSecure enables consistent, repeatable security testing of the areas where data is collected, processed, stored, and shared.

This approach:

- Extends dynamic testing beyond the splash screen and into real user workflows

- Adapts automatically as UI and business logic change

- Eliminates fragile scripts and manual reconfiguration

- Fits naturally into modern DevSecOps and mobile release pipelines

- Tests data in motion for first-party code and third-party components

Authenticated testing runs entirely within the NowSecure Platform, providing centralized visibility and governance across security, privacy, safety, and compliance. It was built with security in mind: the AI model never has access to any sensitive data or credentials.

For CISOs and risk leaders

CISOs need defensible assurance that mobile risk is understood and controlled. NowSecure enables:

- Visibility into authenticated mobile risk that traditional tools miss

- Continuous validation of security controls as apps evolve

- Stronger audit readiness for privacy, data protection, and AI governance

- Reduced likelihood of high-impact mobile breaches

The outcome is confidence that mobile apps meet enterprise risk, compliance, and safety expectations.

For AppSec and DevSecOps teams

AppSec teams need depth, accuracy, and scalability without operational drag. NowSecure delivers:

- Full dynamic testing after authentication, not just perimeter scans

- Over 90% reduction in authenticated setup and testing time

- Reliable coverage across releases as UI and workflows change

- Unified analytics and reporting across authenticated and unauthenticated testing

Teams can test more apps, more often, without increasing headcount.

For developers

Developers want security that works with delivery, not against it. NowSecure:

- Tests real app behavior without requiring code changes

- Reduces late-cycle security surprises

- Avoids brittle automation that breaks with normal UI updates

- Provides actionable findings tied to real runtime behavior

Security becomes a continuous control, not a release blocker.

Webinar – Find the Risks That Matter Most: AI-Powered Dynamic Authenticated Testing for Mobile Apps

Learn how NowSecure AI-Navigator eliminates the bottleneck. Enter test credentials, click run, and let AI handle the login. No manual configuration. No scripts to maintain. Built for multilingual apps, supporting any spoken language. True self-service that adapts when app UI changes.

How NowSecure enables authenticated coverage

- Automated authentication and navigation

Login workflows are handled automatically, allowing testing to proceed inside authenticated areas.

- Dynamic testing in real-world conditions

Vulnerabilities, data leaks, and risky behaviors are identified while the app is running on real devices.

- Scriptless, adaptive automation

Testing adapts as the app changes, eliminating ongoing maintenance.

- Real device execution

Testing runs on physical Android and iOS devices for accurate detection of platform-specific risks.

- Unified platform reporting

All findings roll up into the NowSecure Platform for centralized risk management and reporting.

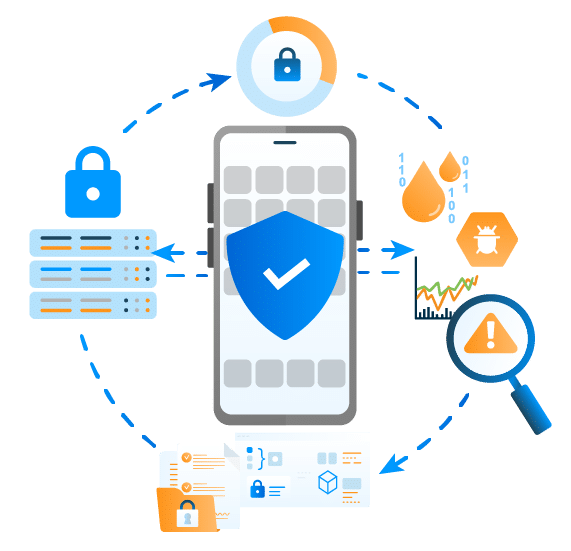

Security and privacy by design

AI-Navigator applies AI in a secure and transparent way.

- All credentials and testing data remain inside the NowSecure environment

- No customer data is shared with AI models

- AI reasoning uses non-sensitive visual and structural UI context in real time

- No credentials or app data are retained or used to train models

This ensures full data protection, auditability, and compliance with enterprise and government requirements.

Why organizations choose NowSecure

NowSecure is purpose-built for mobile application risk management and trusted by enterprises and government agencies worldwide.

Organizations rely on NowSecure for:

- Over a decade of mobile AppSec specialization

- Proven adoption across Fortune 500 and regulated industries

- A unified platform for mobile DAST, SAST, IAST, Pen Testing and API security

- Alignment with OWASP MASVS, NIAP, ADA MASA, and global standards

- Architecture designed for transparency, control, and audit readiness

- AI, Data, Supply Chain and 3rd Party App Security for mobile apps

The Outcome

CISOs gain clarity into authenticated mobile risk.

AppSec teams gain speed and scalable, reliable coverage.

Developers gain security that keeps pace with delivery.

Take the next step

See how NowSecure enables authenticated mobile security that reflects real-world risk and real-world use.

Request a demo or register for the upcoming webinar to see AI-Navigator in action.

Mobile AI Governance & Security FAQs

Why is AI governance important and how does it work?

AI governance is important because mobile apps increasingly send personal, proprietary, and regulated data to AI models and endpoints, sometimes without clear visibility or approval.

NowSecure research highlights risks such as privacy-law violations, cross-border data transfer, data leakage, model theft, hardcoded API keys, and unauthorized AI use.

AI governance works by creating policies, discovering AI components, testing runtime behavior, mapping sensitive data flows, prioritizing risk, remediating issues, and preserving evidence for audits, procurement, app stores, and regulators.

In mobile, runtime testing is essential because hidden AI behavior may not appear in code review.

What are the key goals and principles of AI governance?

For mobile apps, NowSecure views AI governance as a program to identify, control, and prove responsible AI use.

Core goals include discovering shadow AI, protecting sensitive data, preventing unauthorized AI integrations, securing AI API keys and models, validating third-party SDK behavior, and maintaining compliance evidence.

Key principles include transparency, least-privilege data access, continuous testing, risk-based prioritization, auditability, and shared ownership across security, privacy, compliance, and engineering.

Governance should cover both AI features intentionally built into apps and AI introduced through SDKs, generated code, APIs, or supply chain components.

How do we build an effective AI governance framework in our organization?

At NowSecure, we recommend building AI governance around visibility, policy, testing, ownership, and evidence.

Start by inventorying where AI exists across mobile apps, including AI models, endpoints, SDKs, local files, third-party components, and data flows. Then define policies for approved AI use, sensitive data handling, third-party AI services, licensing, API keys, and regulatory obligations.

Continuous mobile testing should verify what apps actually do at runtime, not just what teams believe is in the code.

NowSecure helps organizations discover hidden AI, prioritize risk, validate controls, and produce governance evidence across first-party and third-party mobile apps.

How can we ensure AI systems are fair, transparent, secure, and free of bias?

NowSecure focuses on the mobile app controls that support trustworthy AI: transparency into AI components, secure data handling, validated disclosures, and continuous testing.

Fairness and bias require model governance, training-data review, and business oversight, but mobile teams must also confirm which AI services are used, what data is collected, where it goes, whether API keys are protected, and whether third-party SDKs introduce hidden AI behavior.

By testing apps dynamically and mapping AI data flows, organizations can make AI use more transparent, reduce security and privacy risk, and provide defensible evidence for governance reviews.

What ROI do security teams get from continuous AI governance testing in mobile apps?

Continuous AI governance testing helps security teams reduce manual review effort, find hidden AI earlier, prevent costly compliance surprises, and prioritize the apps that carry the most risk.

NowSecure enables teams to identify AI models, AI-powered services, SDKs, third-party components, runtime interactions, and sensitive data movement across mobile portfolios.

That visibility lets organizations focus remediation where security, privacy, and compliance impact is highest instead of treating every app equally. Automated, integrated testing also supports faster release schedules while reducing organizational risk and improving audit readiness.

How do we prepare audit evidence for AI governance in mobile apps?

NowSecure recommends preparing audit evidence by documenting AI inventory, data flows, runtime behavior, third-party AI components, API endpoints, local AI files, SDKs, permissions, and remediation history.

Evidence should show which apps use AI, what data AI systems can access or transmit, where that data goes, and whether usage aligns with policy, contractual obligations, app store disclosures, and regulatory requirements.

NowSecure testing gives teams centralized reporting, governance visibility, and repeatable validation across authenticated and unauthenticated workflows, helping organizations demonstrate that AI risk is understood and controlled.

Can NowSecure detect AI endpoints, AI SDKs, and unauthorized data flows in mobile apps?

Yes. NowSecure helps organizations detect AI local files, AI endpoint URLs, AI libraries, cloud-based AI usage, on-device AI usage, and exposed AI API keys in mobile apps.

We also test runtime behavior to identify data collection, transmission, third-party connections, and sensitive data flows that may not be visible through code review alone.

This helps teams uncover shadow AI, identify unauthorized AI services or SDKs, and understand whether personal, business, or proprietary data is moving to AI systems in ways that create security, privacy, or compliance risk.
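To make the idea of detecting AI endpoint URLs and exposed API keys concrete, here is a minimal sketch of a string scan over text extracted from an app build. It is not how NowSecure implements detection. The host watchlist, the `sk-` key pattern, and the `scan_strings` helper are illustrative assumptions, and a static scan like this cannot see runtime behavior, which is why the answer above stresses dynamic testing.

```python
import re

# Assumed watchlist of public AI API hostnames (illustrative, not exhaustive).
AI_HOSTS = (
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
)

# Assumed heuristic for key-like secrets: an "sk-" prefix plus 20+ token characters.
KEY_PATTERN = re.compile(r"\bsk-[A-Za-z0-9_-]{20,}\b")
# Captures the host portion of http(s) URLs.
URL_PATTERN = re.compile(r"https?://([A-Za-z0-9.-]+)")


def scan_strings(strings):
    """Return AI endpoint hosts and key-like strings found in an iterable of strings."""
    hosts, keys = set(), set()
    for s in strings:
        for host in URL_PATTERN.findall(s):
            if host.lower() in AI_HOSTS:
                hosts.add(host.lower())
        keys.update(KEY_PATTERN.findall(s))
    return {"ai_hosts": sorted(hosts), "key_like": sorted(keys)}
```

A hit from a scan like this is only a lead: whether personal or proprietary data actually reaches the endpoint still has to be confirmed by observing the running app.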

How do we inventory AI components and AI data flows across all mobile apps?

NowSecure recommends building the inventory through continuous mobile app testing across first-party and third-party apps.

The inventory should capture AI models, local AI files, AI libraries, SDKs, AI-powered services, endpoint URLs, API keys, vendors, teams responsible for the functionality, and data flows observed at runtime.

NowSecure AI Discovery helps organizations identify which apps use AI, where AI enters through code or third-party components, what data AI systems can access or move, and which apps require deeper review or remediation.
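The inventory fields listed above can be sketched as a simple record type. This is a hypothetical data model for illustration, not the NowSecure AI Discovery schema. The `AIComponent` class, its field names, the `apps_needing_review` helper, and the sensitive-data labels are all assumptions.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AIComponent:
    """One AI-related item observed in a mobile app (hypothetical schema)."""
    app_id: str
    kind: str                      # e.g. "sdk", "local_model", "endpoint", "library"
    name: str
    vendor: str = "unknown"
    owner_team: str = "unassigned"
    data_flows: List[str] = field(default_factory=list)  # data categories seen at runtime


def apps_needing_review(inventory, sensitive=("pii", "credentials", "health")):
    """Flag apps whose AI components lack an owner or touch sensitive data."""
    flagged = set()
    for component in inventory:
        unowned = component.owner_team == "unassigned"
        touches_sensitive = any(flow in sensitive for flow in component.data_flows)
        if unowned or touches_sensitive:
            flagged.add(component.app_id)
    return sorted(flagged)
```

Keeping ownership and observed data flows on the same record is one way to answer both "which apps use AI" and "which apps require deeper review" from a single inventory.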

Why do security teams need AI governance controls for mobile apps now?

Security teams need AI governance controls now because AI is rapidly entering mobile apps through features, generated code, third-party SDKs, embedded services, and external AI endpoints.

NowSecure notes that AI introduces new security, privacy, reputation, regulatory, and compliance risks, especially when sensitive data is transmitted to models or unauthorized AI services.

Mobile apps are uniquely exposed because they handle personal data, authenticate users, connect to APIs, and include many third-party components. Without governance controls, teams may miss shadow AI, hardcoded AI keys, insecure integrations, and unapproved data flows until after release.

How do AI governance controls work in a mobile app release process?

In a mobile release process, AI governance controls should run continuously from development through release.

NowSecure recommends testing each build for AI endpoints, SDKs, local models, hardcoded API keys, sensitive data flows, insecure transmissions, and third-party AI behavior.

Findings should be prioritized by security, privacy, and compliance impact, routed to developers with remediation guidance, and retested before release. For authenticated app areas, NowSecure AI-Navigator can automate login and navigate real workflows so testing reaches the places where data is collected, processed, stored, and shared.
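The prioritize, route, and retest loop described above could be wired into a pipeline as a release gate. The sketch below is one possible shape, under stated assumptions: the `Finding` fields, the 0 to 3 impact scale, and the `block_at` threshold are hypothetical and are not part of any NowSecure API.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """A test finding with assumed 0-3 impact scores per risk dimension."""
    title: str
    security: int
    privacy: int
    compliance: int
    fixed: bool = False  # set to True once a retest confirms remediation


def priority(finding):
    """Use the highest impact across the three dimensions as the priority."""
    return max(finding.security, finding.privacy, finding.compliance)


def release_gate(findings, block_at=3):
    """Order open findings by priority and block release on any at or above block_at."""
    open_findings = sorted(
        (f for f in findings if not f.fixed), key=priority, reverse=True
    )
    blockers = [f.title for f in open_findings if priority(f) >= block_at]
    return {
        "release_ok": not blockers,
        "blockers": blockers,
        "queue": [f.title for f in open_findings],
    }
```

Taking the maximum rather than the sum is a design choice: a single severe privacy or compliance impact blocks the release even when the other dimensions score low.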

What controls should an AI governance program include for mobile apps and third-party AI SDKs?

A mobile AI governance program should include AI discovery, third-party SDK analysis, runtime data-flow testing, endpoint monitoring, API key protection, model and local file inventory, policy enforcement, secure storage, encryption, regulatory mapping, and audit reporting.

NowSecure also recommends tracking where AI endpoints are hosted, identifying local models and AI libraries, securing sensitive data transmission, and testing against OWASP MASVS for broader mobile privacy, resilience, networking, cryptography, authentication, storage, and code risks.

These controls should apply to both first-party AI features and AI introduced through SDKs, open-source components, or supply chain dependencies.

How do we manage regulatory, compliance, and third-party AI SDK risks in mobile apps?

NowSecure recommends managing these risks by continuously identifying AI SDKs, libraries, endpoints, local models, vendors, and runtime data flows across the mobile app ecosystem.

Teams should validate whether AI use is disclosed, authorized, licensed, secure, and compliant with privacy, procurement, app store, and regulatory expectations.

Third-party SDKs deserve special attention because they can introduce AI functionality or data sharing outside direct engineering control. Dynamic testing is critical to determine whether sensitive data is transmitted, stored insecurely, sent across jurisdictions, or exposed through unencrypted connections or hardcoded API keys.
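As an illustration of turning these checks into policy, the following sketch evaluates one observed network flow against an allowlist. Everything here is assumed for the example: the `POLICY` structure, the placeholder host `ai.example-vendor.com`, the region labels, and the `check_flow` helper. Real policies would come from an organization's own privacy and procurement requirements.

```python
from urllib.parse import urlparse

# Hypothetical policy: approved AI hosts and regions where sensitive data may be sent.
POLICY = {
    "approved_hosts": {"ai.example-vendor.com"},
    "allowed_regions": {"EU"},
}


def check_flow(url, region, contains_sensitive, policy=POLICY):
    """Return a list of policy violations for one flow observed at runtime."""
    parsed = urlparse(url)
    violations = []
    if parsed.scheme != "https":
        violations.append("unencrypted connection")
    if parsed.hostname not in policy["approved_hosts"]:
        violations.append("unapproved AI host")
    if contains_sensitive and region not in policy["allowed_regions"]:
        violations.append("sensitive data leaves allowed regions")
    return violations
```

The inputs (destination URL, hosting region, and whether sensitive data was present) are the kind of facts that only runtime observation can supply, which is the point the paragraph above makes about dynamic testing.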

Who should own AI governance for mobile apps across security, privacy, compliance, and engineering?

NowSecure recommends shared ownership with clear accountability.

Security should own technical risk testing, AppSec should integrate controls into mobile release workflows, privacy should define data-use and disclosure requirements, compliance should map regulatory and audit obligations, and engineering should remediate issues and control SDK adoption. Product and business owners should approve AI use cases and acceptable data flows.

Because AI risk crosses security, privacy, compliance, procurement, and development, governance should operate as a coordinated program supported by continuous evidence from mobile app testing rather than as a one-time checklist.

How can we test whether AI features in mobile apps are secure, compliant, and not collecting unauthorized data?

NowSecure recommends testing AI-enabled mobile apps with a combination of static analysis, dynamic runtime testing, authenticated workflow testing, API and network inspection, SDK analysis, and sensitive data-flow validation.

Teams should identify AI endpoints, local models, AI libraries, hardcoded API keys, unencrypted connections, insecure integrations, and unauthorized transmission of personal or business data.

Testing should also confirm where data is hosted, whether disclosures match actual behavior, and whether app behavior aligns with OWASP MASVS, privacy, procurement, and compliance requirements. Authenticated dynamic testing is especially important because major risks often appear only after login.

How do we build an AI governance framework for mobile apps that use AI features or AI SDKs?

At NowSecure, we recommend starting with AI discovery, then building enforceable controls into the mobile SDLC.

Inventory AI features, SDKs, local models, endpoints, API keys, vendors, data flows, and ownership. Define policies for approved AI use, sensitive data handling, licensing, disclosures, jurisdiction, and third-party components.

Continuously test apps at build time, runtime, and after authentication to uncover shadow AI, insecure transmissions, hidden SDK behavior, and unauthorized data collection. Prioritize findings by security, privacy, and compliance impact, remediate before release, retest fixes, and preserve evidence for audits and governance reviews.